Key takeaways

The AI distraction hiding a much older problem

The creative industry has spent the last two years debating what AI can generate. That conversation is worth having — but it has quietly overshadowed a problem that predates large language models by decades: most creative teams are still drowning in avoidable revision cycles, and no amount of generative tooling fixes that.

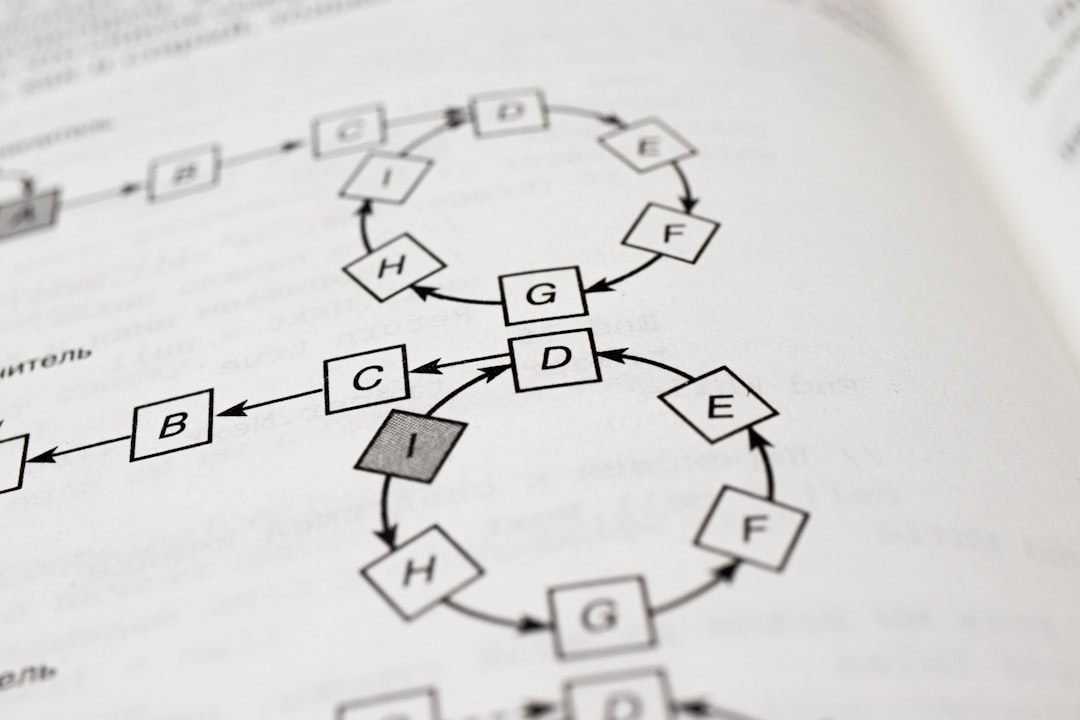

The reason revision cycles spiral isn't that reviewers lack creativity or miss obvious problems. It's that feedback arrives in fragments — a comment in an email thread here, a voice note there, a sticky note forwarded as a screenshot — and nobody can see the full picture. When feedback is invisible or scattered, it gets repeated. Decisions get relitigated. Work that was signed off in round two reappears as a question in round five.

AI shortcuts can speed up the drafting phase. They do almost nothing to fix the approval process that follows it.

Why creative revisions keep multiplying (and it's not what you think)

Creative revision cycles multiply for one consistent reason: the feedback loop is broken, not the creative output. This distinction matters enormously, because it determines where you invest your energy.

Most teams instinctively try to improve their output — tighter briefs, better references, more detailed moodboards — in the hope that better inputs mean fewer corrections. Sometimes they help at the margins. But if the review process itself has no structure, even a perfectly executed first draft will accumulate revisions as stakeholders pile on at different stages, without context for what's already been discussed.

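Three patterns tend to drive most of the damage: feedback scattered across channels, reviewers commenting simultaneously without seeing each other's input, and decisions that are never recorded against the version they apply to.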

None of these problems are solved by an AI tool that generates cleaner copy or sharper visuals. They are structural failures in how teams manage the review process.

How structured feedback loops actually cut revision rounds

A structured feedback loop reduces creative revisions by giving every reviewer a defined role, a clear window for input, and a record of what they said. Teams that implement structured proofing workflows typically report cutting their revision rounds by 30–40% within the first quarter of adoption — not because the creative work improved overnight, but because duplicate and contradictory feedback dropped sharply.

The mechanics are straightforward:

Define who reviews and in what order

Sequential or staged review — where specific stakeholders sign off before the work moves to the next group — eliminates the "too many cooks" problem. A copywriter shouldn't be receiving brand-compliance feedback at the same time as the legal team is raising tone-of-voice concerns. Stage your reviewers and the conflicts between their comments largely disappear.
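As a rough illustration of staged review, here is a minimal Python sketch. The `Stage` and `StagedReview` classes and the reviewer names are hypothetical and are not taken from any particular proofing tool. The sketch assumes one simple rule: only reviewers in the earliest unfinished stage may sign off.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class Stage:
    """One review stage: a named group of reviewers who must all approve."""
    name: str
    reviewers: Set[str]
    approvals: Set[str] = field(default_factory=set)

    def is_complete(self) -> bool:
        # Complete once every assigned reviewer has approved.
        return self.reviewers <= self.approvals


class StagedReview:
    """Runs stages strictly in order; only the active stage may sign off."""

    def __init__(self, stages: List[Stage]) -> None:
        self.stages = stages

    def current_stage(self) -> Optional[Stage]:
        # The first stage with outstanding approvals is the active one.
        for stage in self.stages:
            if not stage.is_complete():
                return stage
        return None  # every stage has signed off

    def approve(self, reviewer: str) -> None:
        stage = self.current_stage()
        if stage is None:
            raise ValueError("review is already fully approved")
        if reviewer not in stage.reviewers:
            raise PermissionError(
                f"{reviewer} is not a reviewer in the active stage '{stage.name}'"
            )
        stage.approvals.add(reviewer)


# Hypothetical two-stage sequence: brand signs off before legal sees the work.
review = StagedReview([
    Stage("brand", {"maya"}),
    Stage("legal", {"omar", "li"}),
])
review.approve("maya")  # brand stage completes, legal becomes active
```

In this sketch a legal reviewer who tries to approve before brand has finished gets a `PermissionError`, which is the code-level version of keeping the two groups out of each other's way.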

Attach feedback to the specific element it refers to

Inline, contextual comments — pinned directly to the part of the design or document being discussed — are far more actionable than summary emails. A comment that says "the hierarchy feels off" attached to a specific paragraph is useful. The same comment in the subject line of an email thread is not.
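One way to picture contextual feedback is as a comment record that always carries an anchor. The short Python sketch below assumes a hypothetical `Comment` type and made-up anchor IDs such as `page-1/headline`. It only shows the idea of grouping feedback by the element it refers to.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Comment:
    author: str
    anchor: str  # hypothetical element ID, e.g. "page-1/headline"
    text: str


def group_by_anchor(comments: List[Comment]) -> Dict[str, List[Comment]]:
    """Collect comments per element so each part of the work shows its own thread."""
    grouped: Dict[str, List[Comment]] = defaultdict(list)
    for comment in comments:
        grouped[comment.anchor].append(comment)
    return dict(grouped)


comments = [
    Comment("maya", "page-1/headline", "The hierarchy feels off"),
    Comment("omar", "page-1/hero-image", "Crop tighter"),
    Comment("li", "page-1/headline", "Agree, the subhead competes with it"),
]
threads = group_by_anchor(comments)
```

Because every comment names its anchor, the designer opens the headline and sees both headline comments together, instead of hunting through a summary email.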

Record every decision, not just every comment

This is where most teams fall short. They capture the comment but not the resolution. When a decision is made — to change the headline, to keep the colour, to defer the layout question — that decision needs to be logged against the version it applies to. Without that record, the same conversation resurfaces when anyone new joins the thread.

GoProof is built around exactly this principle: every comment, annotation, and approval decision is tied to the version it was made on, so the history of the work is always visible and never has to be reconstructed from memory or email archaeology.
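To make the idea of logging decisions against versions concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not GoProof's data model or API: the `Decision` and `DecisionLog` names, the fields, and the outcome labels are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class Decision:
    version: int       # version of the work the decision applies to
    topic: str         # e.g. "headline", "colour", "layout"
    outcome: str       # hypothetical labels: "change", "keep", "defer"
    decided_by: str
    decided_at: datetime


class DecisionLog:
    """Append-only record of resolutions, each tied to a version."""

    def __init__(self) -> None:
        self._entries: List[Decision] = []

    def record(self, version: int, topic: str, outcome: str, decided_by: str) -> Decision:
        entry = Decision(version, topic, outcome, decided_by, datetime.now(timezone.utc))
        self._entries.append(entry)
        return entry

    def latest(self, topic: str) -> Optional[Decision]:
        # Most recent resolution for a topic, or None if it was never decided.
        for entry in reversed(self._entries):
            if entry.topic == topic:
                return entry
        return None

    def history(self, version: int) -> List[Decision]:
        # Everything that was decided on one specific version.
        return [e for e in self._entries if e.version == version]


log = DecisionLog()
log.record(2, "headline", "change", "maya")
log.record(2, "colour", "keep", "omar")
log.record(3, "layout", "defer", "li")
```

When someone new joins the thread and asks about the colour, `log.latest("colour")` returns the round-two decision to keep it, along with who made it, so the conversation does not have to start again.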

Version control in design is not the same as saving files

Version control in creative work means maintaining a clear, accessible record of what changed, when, and why — not just having a folder of files labelled "final", "final_v2", and "FINAL_USE_THIS_ONE". That folder structure is almost universal, and it is almost universally useless when a dispute arises.

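Proper version control in a design review workflow does several things that file naming cannot: it links feedback and approval decisions to the specific version they were made on, it keeps comments from one round from being confused with comments from another, it gives the team a clear record of what was approved and when, and it shows late-arriving stakeholders why settled decisions were made.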

This last point is particularly valuable in agency-client relationships, where senior stakeholders often enter the approval process only at the final stage. Without visible version history, their first instinct is frequently to question decisions that took hours to negotiate two weeks earlier. A clear audit trail makes that much less likely.

Why "just use AI" doesn't solve the approval problem

The appeal of AI in creative workflows is understandable. If a tool can catch errors, suggest alternatives, or flag inconsistencies automatically, that should reduce the number of corrections a human reviewer needs to make. In theory, fewer errors mean fewer revisions.

In practice, the approval process isn't primarily about catching errors. Most revision requests aren't corrections — they're preference changes, scope creep, or the result of stakeholders who weren't aligned before the work started. An AI tool that produces a grammatically perfect, visually consistent asset doesn't prevent a client from changing their mind about the direction, or a new decision-maker from wanting to revisit the colour palette.

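The revisions that actually kill creative timelines tend to be structural and relational, not technical. They happen because preferences shift, scope creeps, stakeholders were never aligned on direction, or a new decision-maker reopens a settled question.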

AI doesn't address any of these. A well-designed proofing workflow does.

What a better design review workflow looks like in practice

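A practical, revision-reducing design review workflow doesn't require expensive tooling or a complete process overhaul. It requires discipline around a small number of habits: stage reviewers in a defined order, pin feedback to the element it refers to, and log every decision against the version it applies to.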

Teams using GoProof typically build these habits into the platform itself — staged review rounds, annotation threads, and approval statuses are part of the workflow structure rather than an afterthought bolted onto email.

Fixing the workflow is the lasting fix

AI tools will keep improving, and some of them will genuinely help creative teams work faster. That's worth embracing where it makes sense. But the revision cycle problem is older than the tools trying to solve it, and it won't disappear because the drafting phase got faster.

The teams that consistently run fewer revision rounds aren't the ones with the most sophisticated generative tools. They're the ones with the clearest feedback loops, the most disciplined review sequences, and the most visible audit trails. That combination — structured process, contextual feedback, and version history that actually works — is where the real efficiency gains are.

Workflow is the fix that lasts. AI is just noise until you have that in place.

Frequently asked questions

Why do revisions keep multiplying even when the brief is detailed?

Even a detailed brief doesn't prevent revision inflation if the review process has no structure. Revisions multiply when feedback is scattered across channels, reviewers comment simultaneously without seeing each other's input, or decisions aren't recorded against the version they apply to. The brief reduces misalignment at the start; structured proofing reduces it throughout the approval process.

What is a design review workflow, and why does it matter?

A design review workflow is a structured process that defines who reviews creative work, in what order, and how their feedback is captured and resolved. It matters because unstructured review processes, where feedback arrives from multiple stakeholders simultaneously with no audit trail, are the primary cause of avoidable revision cycles in creative teams.

How does version control in design reduce revisions?

Version control in design links feedback and approval decisions to specific versions of the work, so the history of what changed and why is always visible. This prevents late-arriving stakeholders from relitigating settled decisions, stops comments from one round being confused with comments from another, and gives teams a clear record of what was approved and when.

Can AI proofing tools eliminate revision cycles?

No. AI proofing tools can catch technical errors like spelling mistakes or brand inconsistencies, but most revision requests are preference changes, scope adjustments, or the result of stakeholders who weren't aligned before the work started. These are structural and relational problems that structured feedback loops and review sequences address far more effectively than automated error-checking.

How many revision rounds should a creative project need?

Most creative projects should require no more than two to three structured revision rounds when the brief is agreed upfront and the review process is properly staged. Teams that run unstructured reviews (with no defined sequence, no version-specific feedback, and no decision log) routinely accumulate five or more rounds on work that was fundamentally sound from the first draft.